Defenders know that the quicker you can detect an attack, the quicker you can respond, which leaves attackers less time to accomplish their goals. To that end, one of the most powerful features in the QOMPLX Q:CYBER platform is the extensible streaming rules engine. We use streaming analytics for a variety of purposes in the Q:OS platform, including detecting malicious activity on the network.

In this blog series we’re going to show you how to easily ingest Windows Event Logs (WEL) into Q:CYBER, create basic detections based on those logs, and go into more advanced rule creation using standard rules and time windowed rule evaluations. Don’t worry, it is all much easier than you may think and it all happens in-memory long before reaching a SIEM tool or database.

Log Ingest Architecture

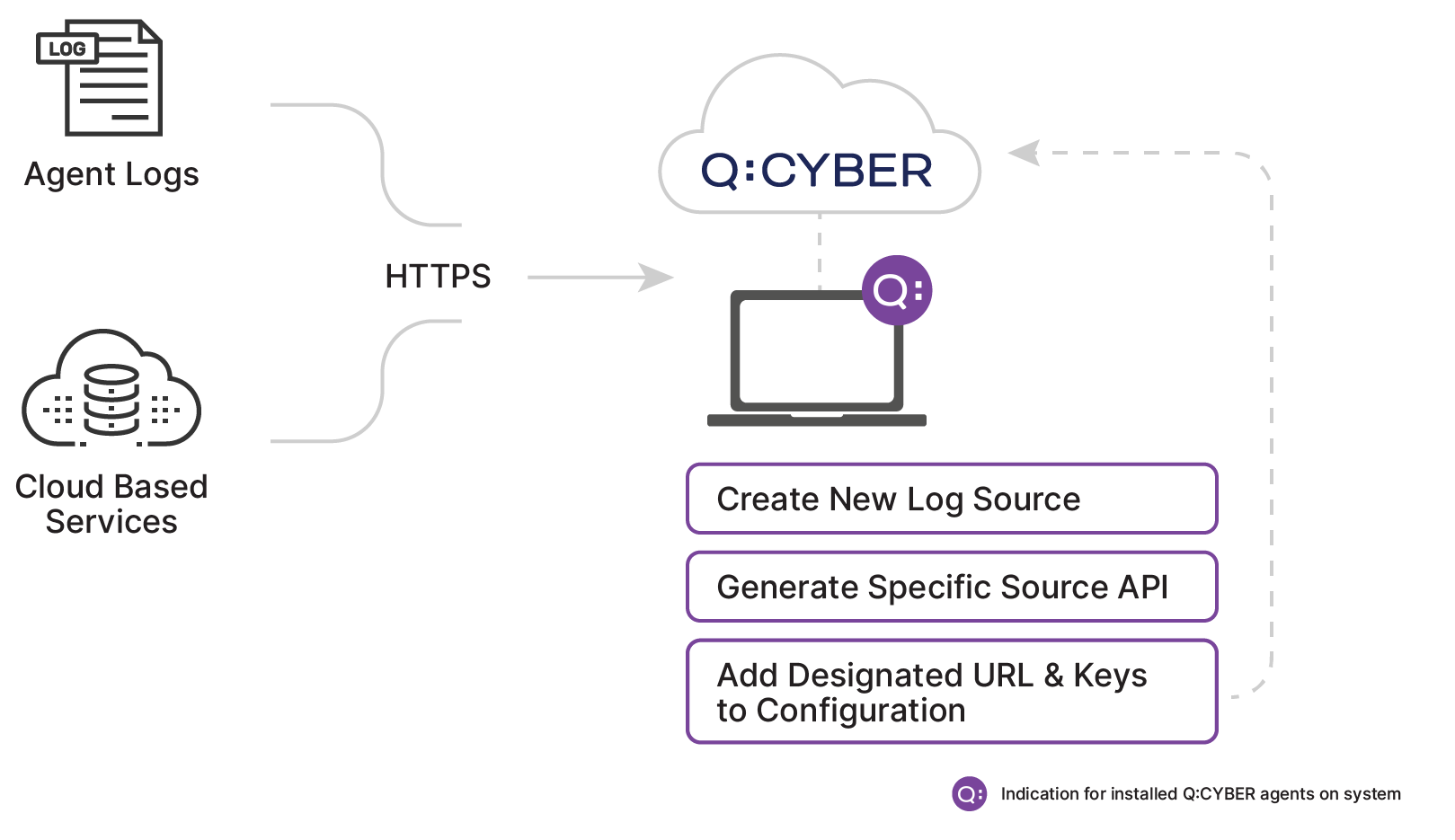

Q:CYBER is designed to ingest any log format with pre-built utilities and parsers which cover ingestion from a variety of common sources. In the diagrams below you can see that there are two possible paths to stream logs to the platform.

Regardless of which path you choose, all logs converge into our streaming analytics engine. The most straightforward approach is to forward logs over HTTPS directly to the Q:CYBER platform. (This method works just as well with cloud-based services such as AWS CloudTrail as it does with on-premises log servers that support this transport method.)

When you create a new log source in Q:CYBER, the platform exposes an endpoint and generates source-specific API keys for authorization. Depending on the forwarding method you select, it is usually just a matter of adding the destination URL and keys to the forwarder's configuration file to get logs flowing into the cloud-native solution.
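As a rough illustration of what "adding the destination URL and keys" amounts to, the Python sketch below builds an HTTPS POST carrying one JSON event. The endpoint URL, the `X-API-Key` header name, and the payload fields are placeholders invented for this example, not the actual Q:CYBER API. Substitute the values the platform generates for your log source.

```python
import json
import urllib.request

# Hypothetical placeholders: use the endpoint and key generated for your
# own log source. The header name is also an assumption for illustration.
INGEST_URL = "https://ingest.example.invalid/logs"
API_KEY = "REPLACE_WITH_SOURCE_API_KEY"


def build_log_request(event, url=INGEST_URL, api_key=API_KEY):
    """Build (but do not send) an HTTPS POST carrying one JSON log event."""
    body = json.dumps(event).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json", "X-API-Key": api_key},
    )


# Building the request performs no network I/O.
request = build_log_request({"EventID": 4625, "Hostname": "WIN-SRV01"})
# To actually send it: urllib.request.urlopen(request, timeout=10)
```

Keeping request construction separate from sending makes it easy to inspect the headers and body before any data leaves the machine.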

In this post we walk you through setting up NXLog Community Edition to forward WELs from a Windows server, but the platform supports output from any source with a supported format such as syslog-ng and even allows for ingesting directly from Splunk Universal Forwarders or Heavy Forwarders.

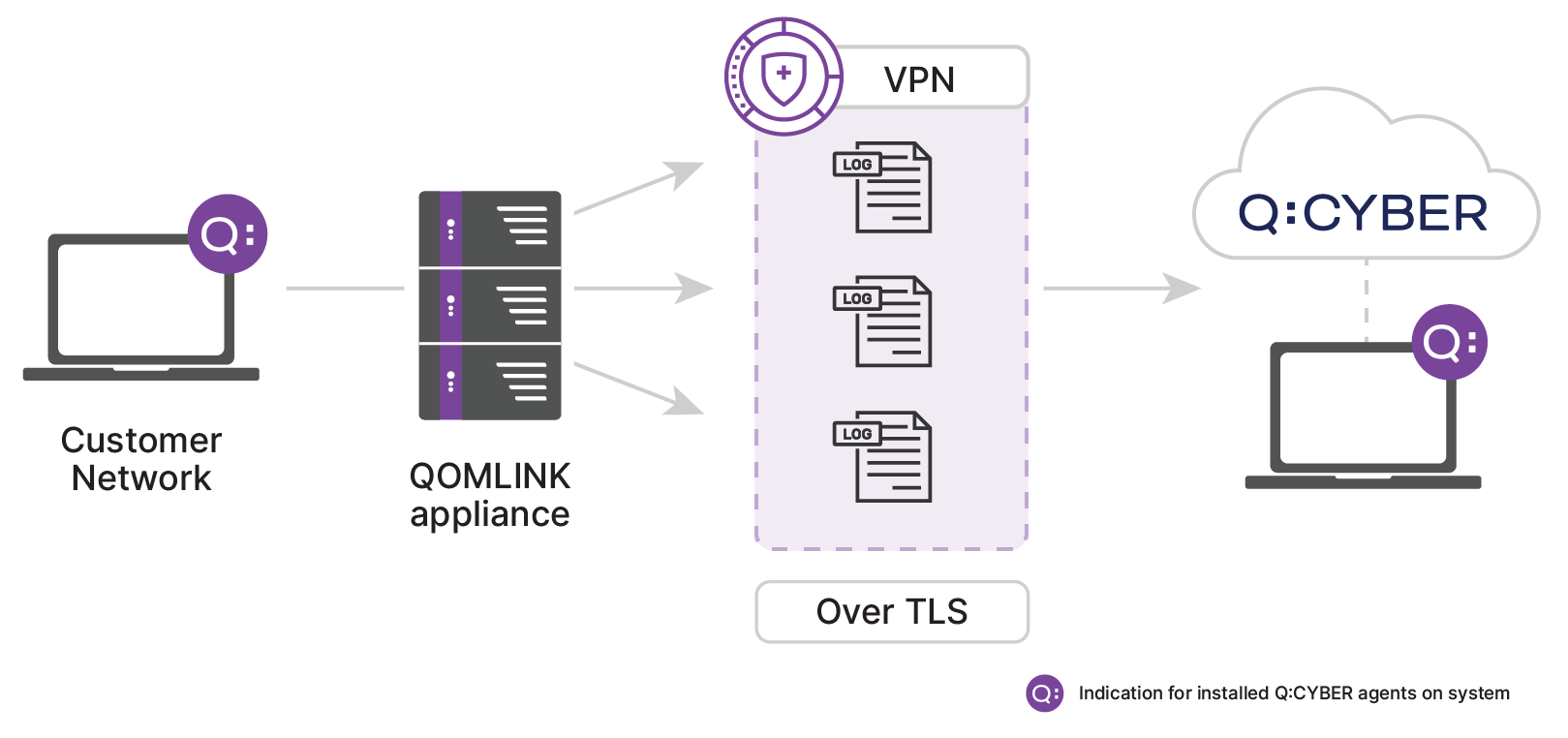

The QOMLINK Virtual Appliance

Q:CYBER has an additional log ingest option for log emitters that cannot use HTTPS. Even today, some log sources do not support outputting logs over HTTPS, and sending their logs in the clear over the public Internet is not good practice because sensitive data could be exposed. For these sources, the second path is through our QOMLINK Virtual Appliance (QLA). QLAs act as data consolidation points deployed within the customer network to facilitate secure transport of on-premises data to Q:CYBER in the cloud.

With a QLA deployed you can point virtually any log source at it and it will securely transport the data downstream for handling. This means log sources can be sent over TCP or UDP with little effort once a QLA is configured.

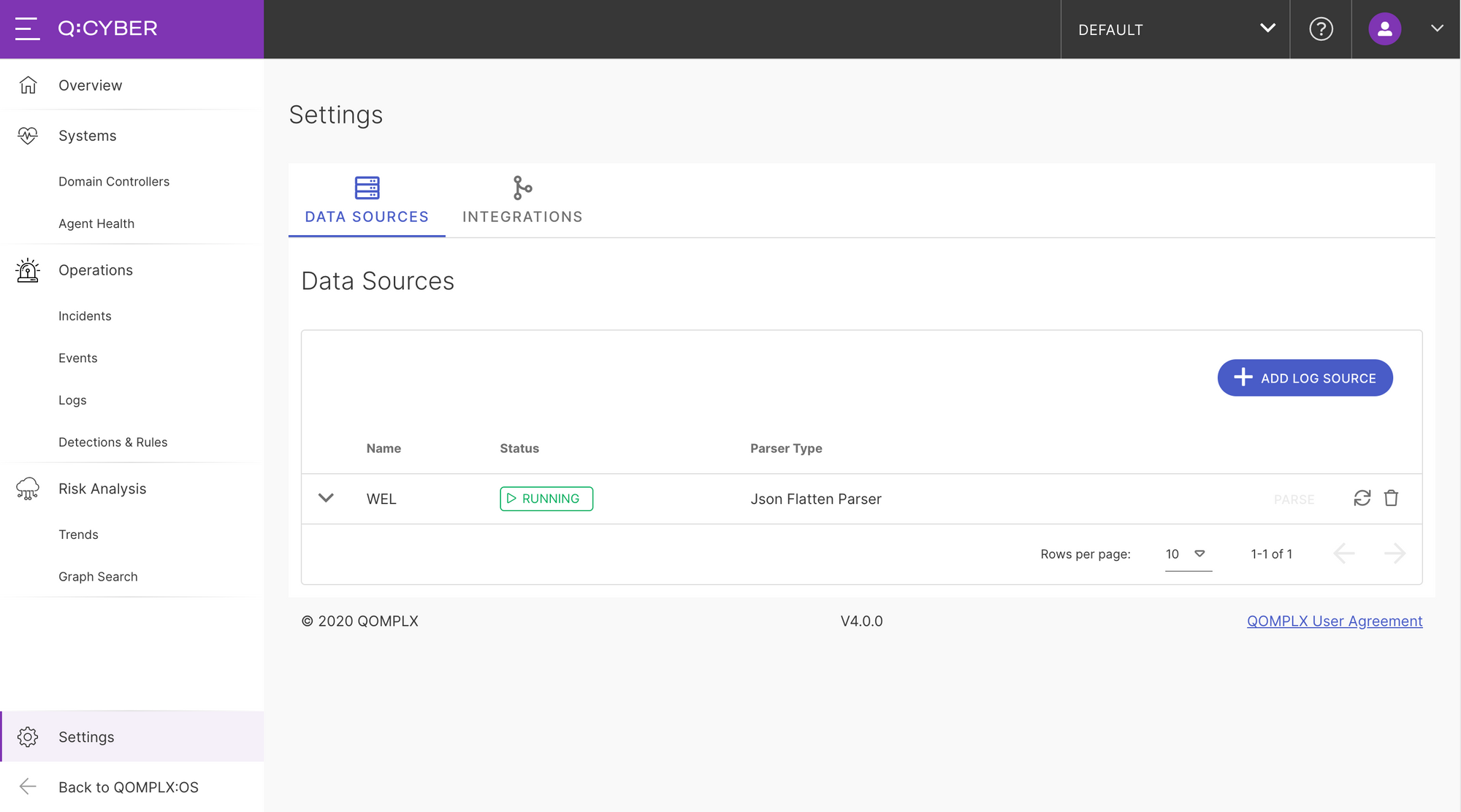

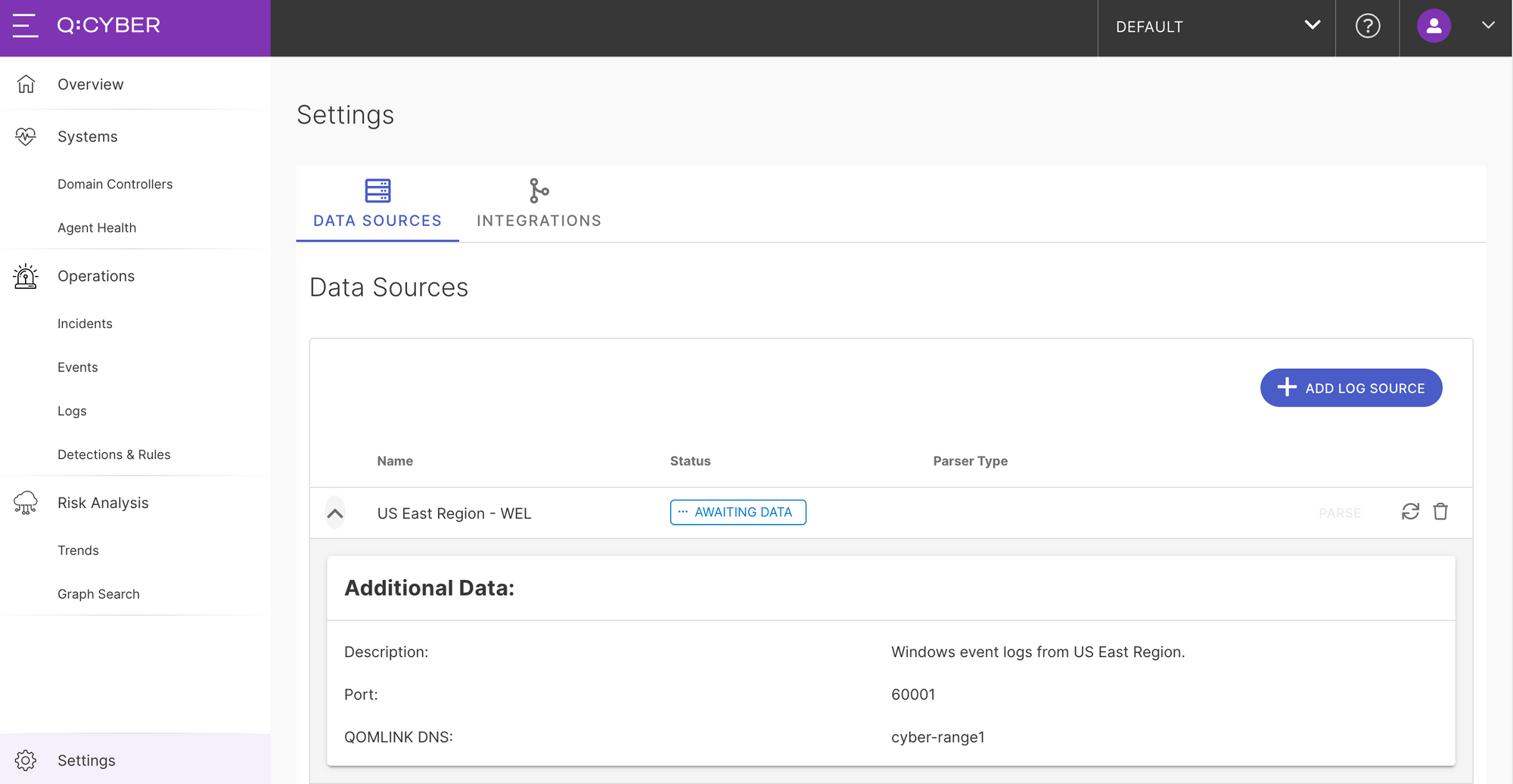

Setting Up A New Data Source

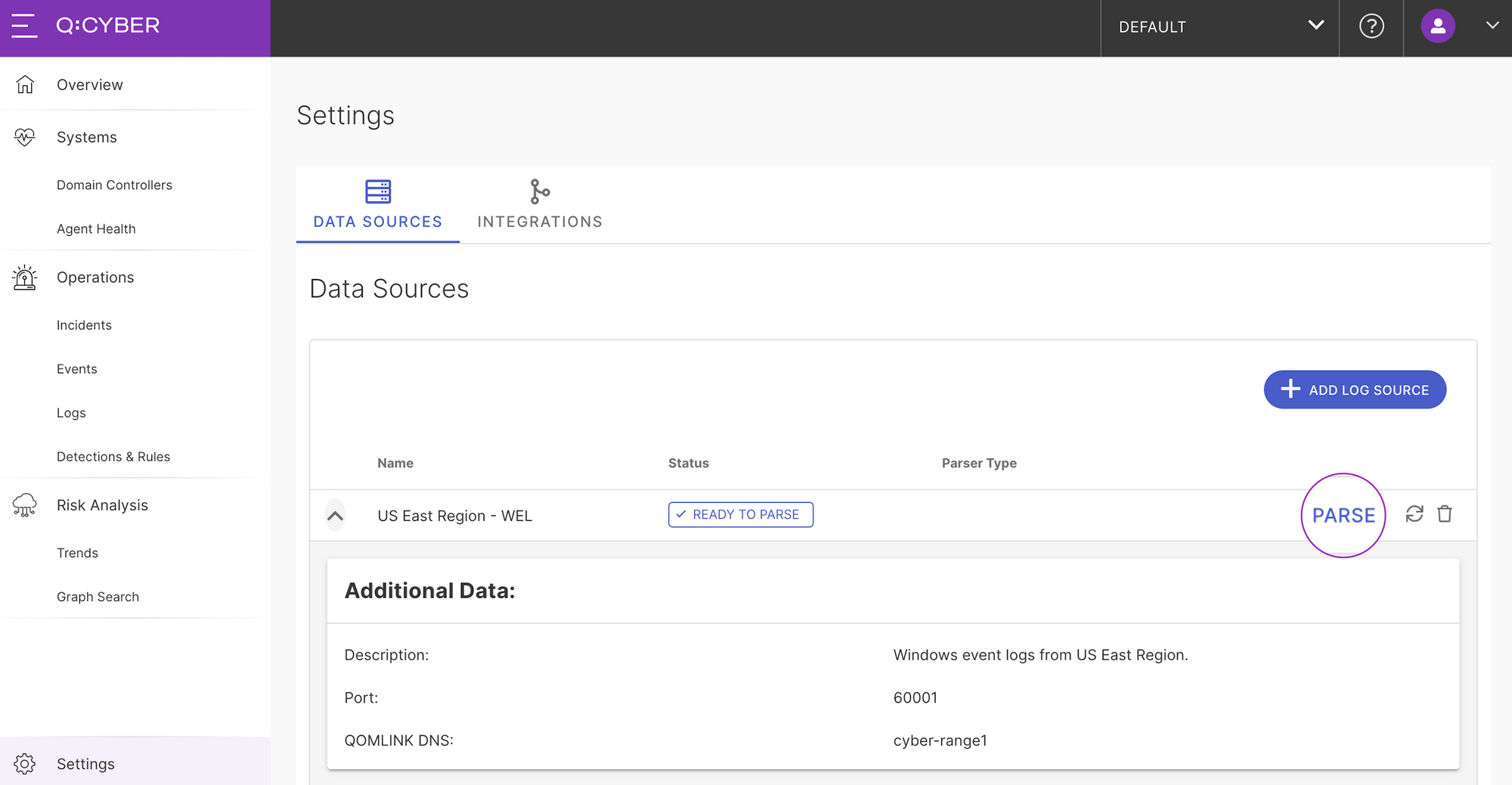

Ingesting a new log source takes only a few minutes to set up in Q:CYBER. After logging in, navigate to the “Data Sources” setup page in settings. On this page you can see any data sources that were previously set up.

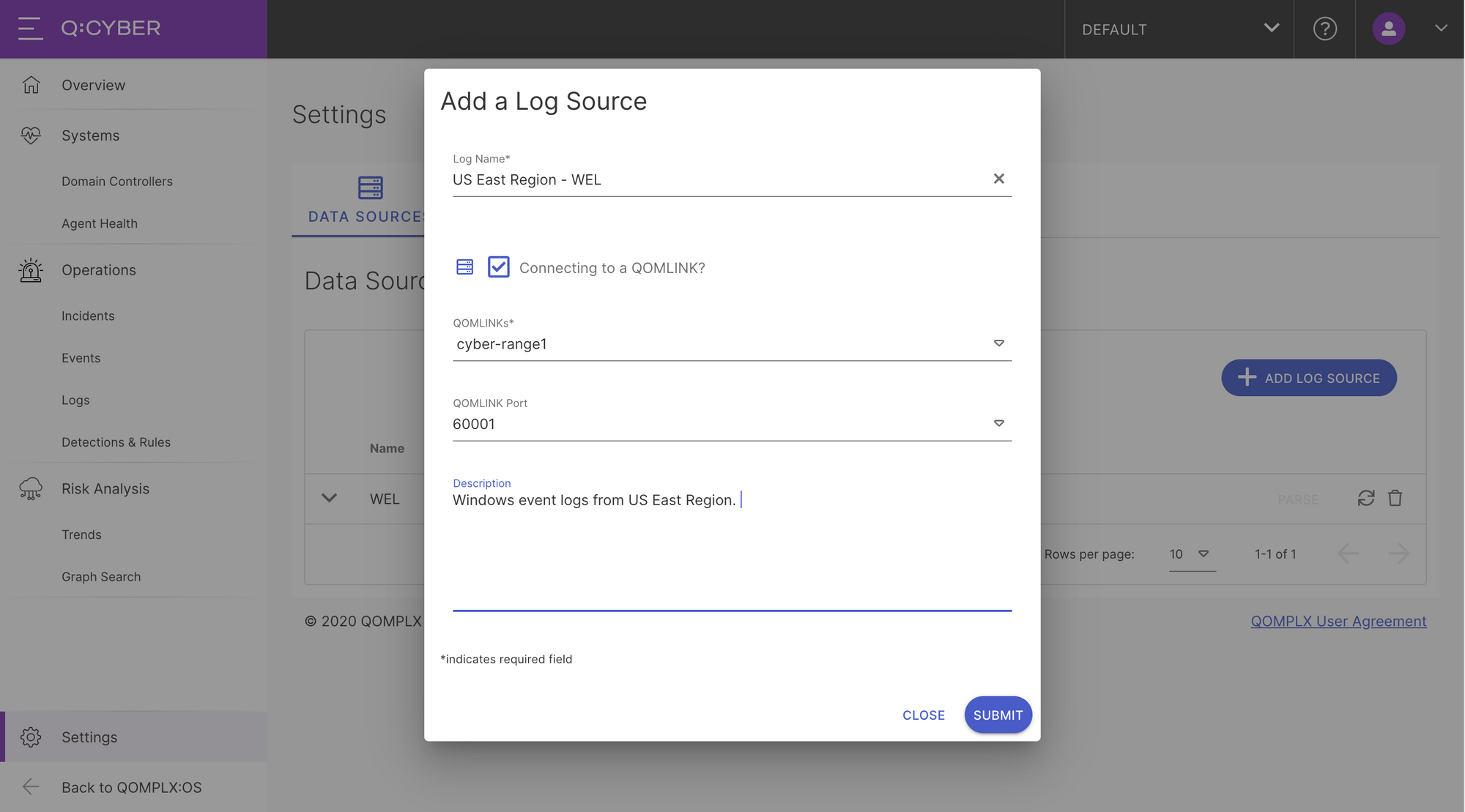

To add a new source, simply click the Add Log Source button. This is where you choose to send logs either directly to Q:CYBER over the Internet or through a QOMLINK Virtual Appliance (QLA). If you choose the former, give the log source a name, optionally include a description, and press submit. In this example we have a QLA deployed and would like to forward logs through it, so we select the “Connecting to a QOMLINK” option, which activates the drop-down menus. Select which QLA you want to forward through and the destination port, optionally add a description, and press submit.

Once you submit, a new Data Source entry will be listed in the table with an Awaiting Data status. The next step is to then configure the log forwarder to send logs to the QLA.

Forwarding Logs with NXLog

Q:CYBER is not dependent on any specific log forwarder. As long as the output format is supported by one of the many parsers in the platform, you can use virtually any log forwarding software. Custom parsers can be supported if needed. One very popular choice is NXLog, which offers a free Community Edition log collector. Documentation on how to install NXLog on Windows can be found here.

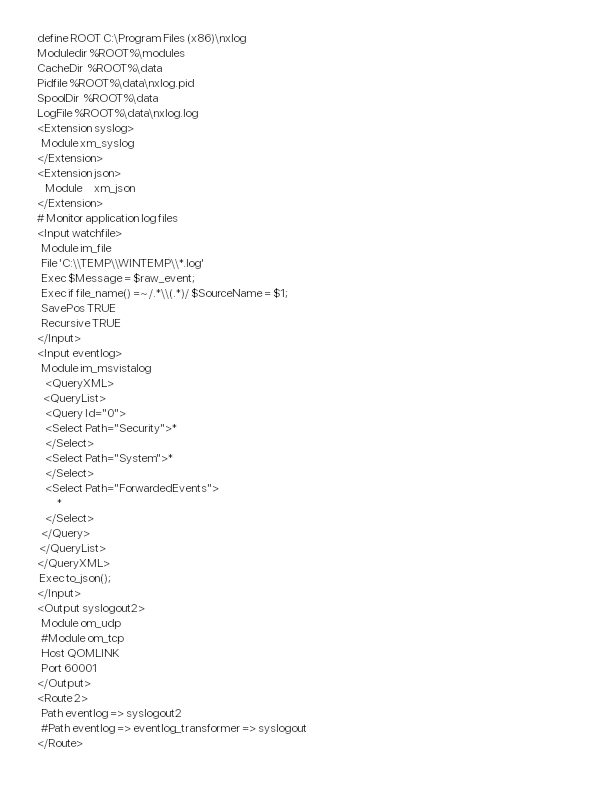

Once NXLog is installed, the next step is to configure the agent to forward logs to the QLA. During QLA deployment we recommend that our customers set a DNS record so the QLAs can be easily referenced by hostname. In our lab we’ve configured the hostname and DNS record as QOMLINK.

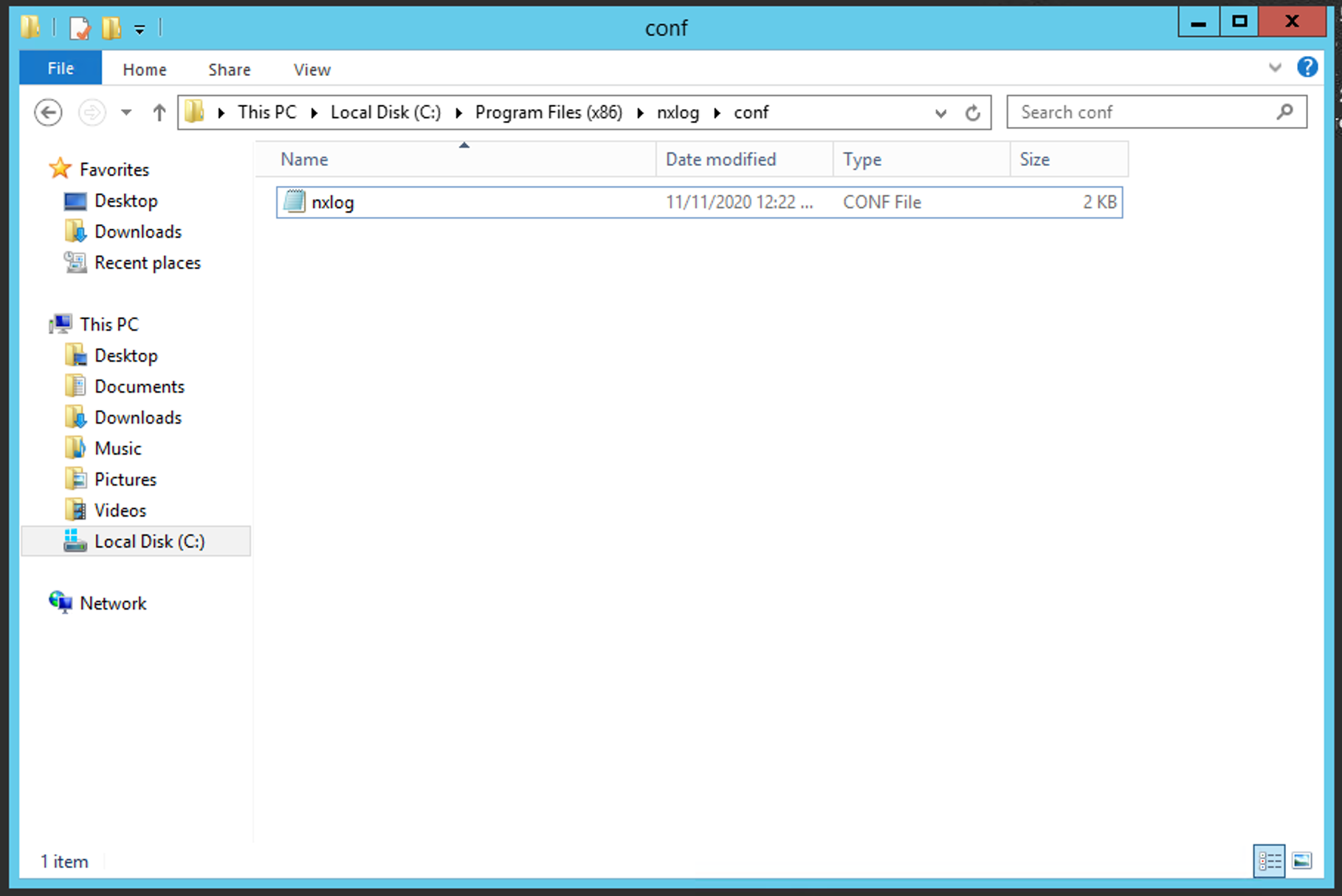

Next, open the configuration file located at:

C:\Program Files (x86)\nxlog\conf\nxlog.conf

You can use either the IP or the hostname in the NXLog configuration. To begin the log forwarding process we edit the “Output syslogout2” section in the nxlog.conf by adding:

- Host QOMLINK

- Port 6001

Also notice that we’re sending logs over UDP, as specified by the Module om_udp directive.
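Before restarting NXLog, it can be useful to check that the QLA hostname resolves and that the port accepts datagrams. The Python sketch below sends a single JSON test event over UDP. It is only a connectivity aid and not part of NXLog or Q:CYBER. The function name and the test payload are made up for this example.

```python
import json
import socket


def send_test_event(host, port, event):
    """Send one JSON-encoded event as a single UDP datagram.

    Returns the number of bytes handed to the network stack.
    """
    payload = json.dumps(event).encode("utf-8")
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        return sock.sendto(payload, (host, port))


# Example call using the lab values from this post (not executed here):
#   send_test_event("QOMLINK", 6001, {"Message": "connectivity test"})
```

Because UDP is connectionless, a successful send only shows that the name resolved and the datagram left the host. Confirmation that the QLA received it comes from the data source status changing in Q:CYBER.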

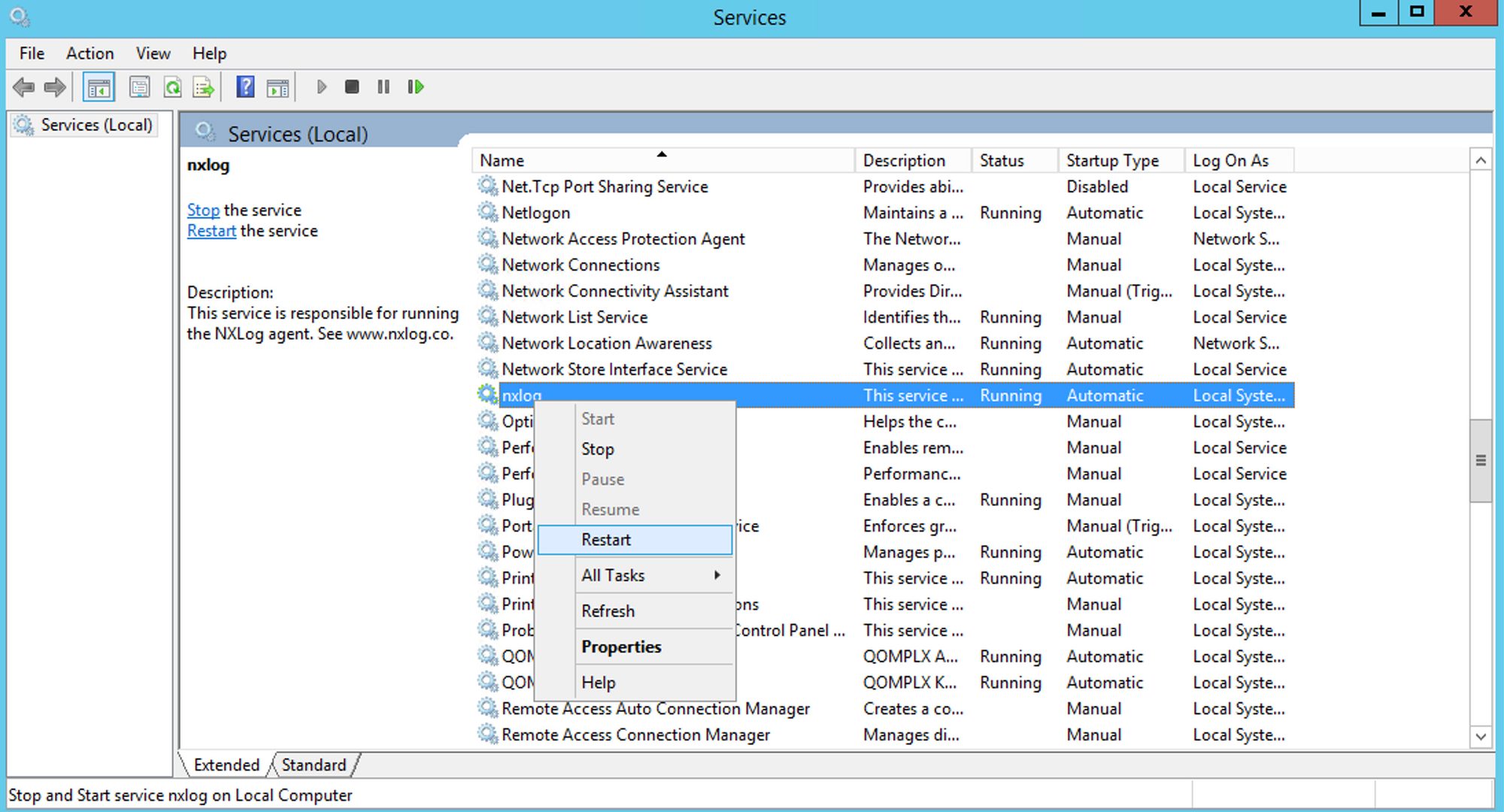

Once the config is saved, simply restart the nxlog service in the service manager for the changes to take effect.

Parsing Logs

The final step for WEL ingest is to configure parsing in Q:CYBER for your new data source. Once logs are successfully forwarded to Q:CYBER, the data source status will change to Ready To Parse and the Parse button will be available.

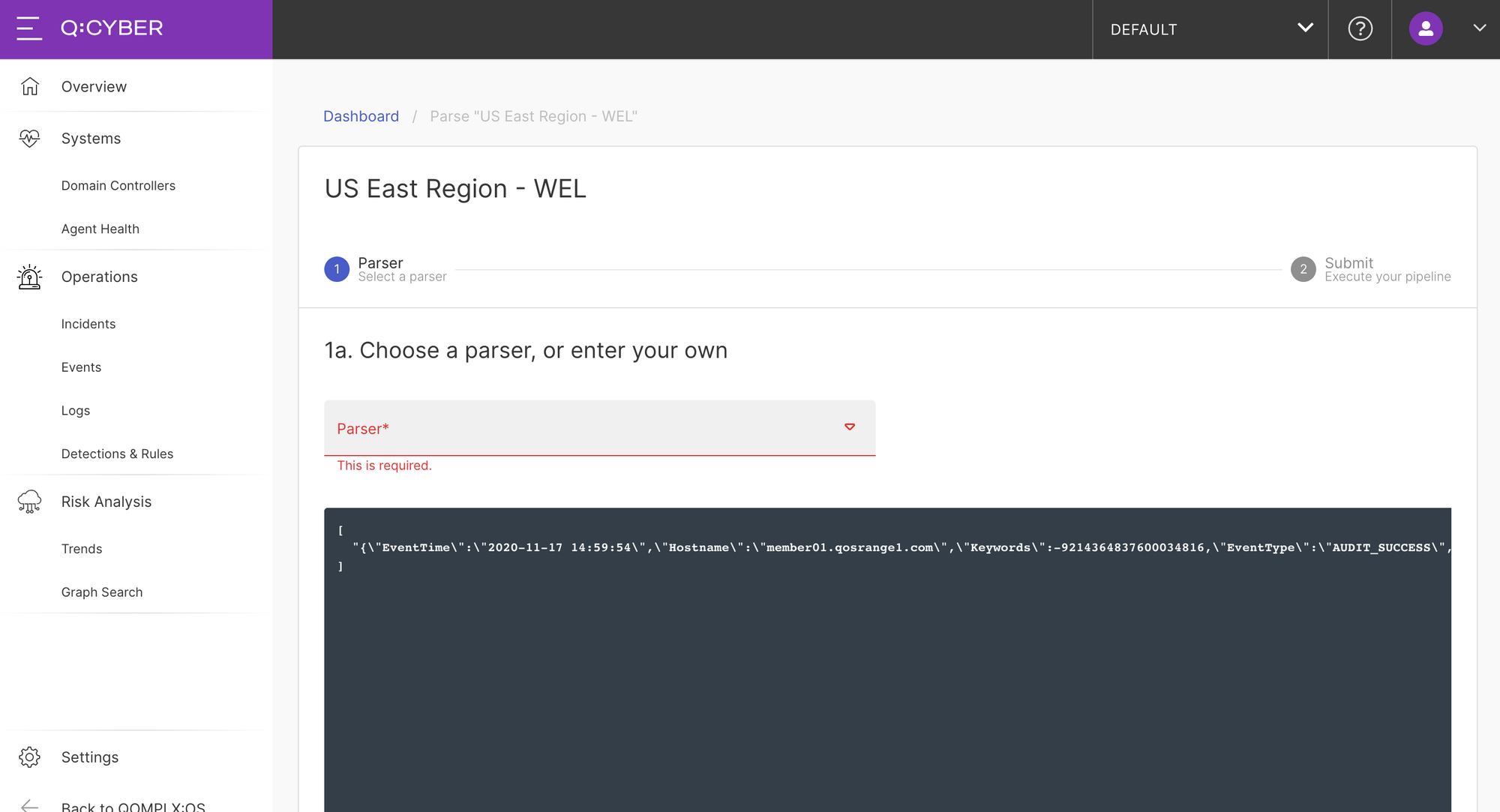

Selecting parse will take you to the parser workflow. You might notice in the screenshot below that there is an example of raw logs sent by the NXLog agent.

Since NXLog was configured to send the event logs in JSON format, we can select the JSON Flattener parser from the dropdown list.
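To give a feel for what flattening a JSON event means in general, here is a minimal Python sketch that collapses nested objects and arrays into a single level of keys. The dot separator, the index-based keys for arrays, and the sample field names are assumptions for illustration. The platform's JSON Flattener may name keys differently.

```python
def flatten(obj, parent_key="", sep="."):
    """Flatten nested dicts and lists into one dict with joined key paths."""
    items = {}
    if isinstance(obj, dict):
        for key, value in obj.items():
            new_key = f"{parent_key}{sep}{key}" if parent_key else str(key)
            items.update(flatten(value, new_key, sep))
    elif isinstance(obj, list):
        for index, value in enumerate(obj):
            new_key = f"{parent_key}{sep}{index}" if parent_key else str(index)
            items.update(flatten(value, new_key, sep))
    else:
        # Scalar leaf: record it under the accumulated key path.
        items[parent_key] = obj
    return items


# Hypothetical event shape, used only to demonstrate the transformation.
sample = {"EventID": 4624, "EventData": {"TargetUserName": "alice"}}
flat = flatten(sample)
```

With this sketch, the nested `TargetUserName` value ends up under the key `EventData.TargetUserName`, which is the kind of single-level field a rule or search can reference directly.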

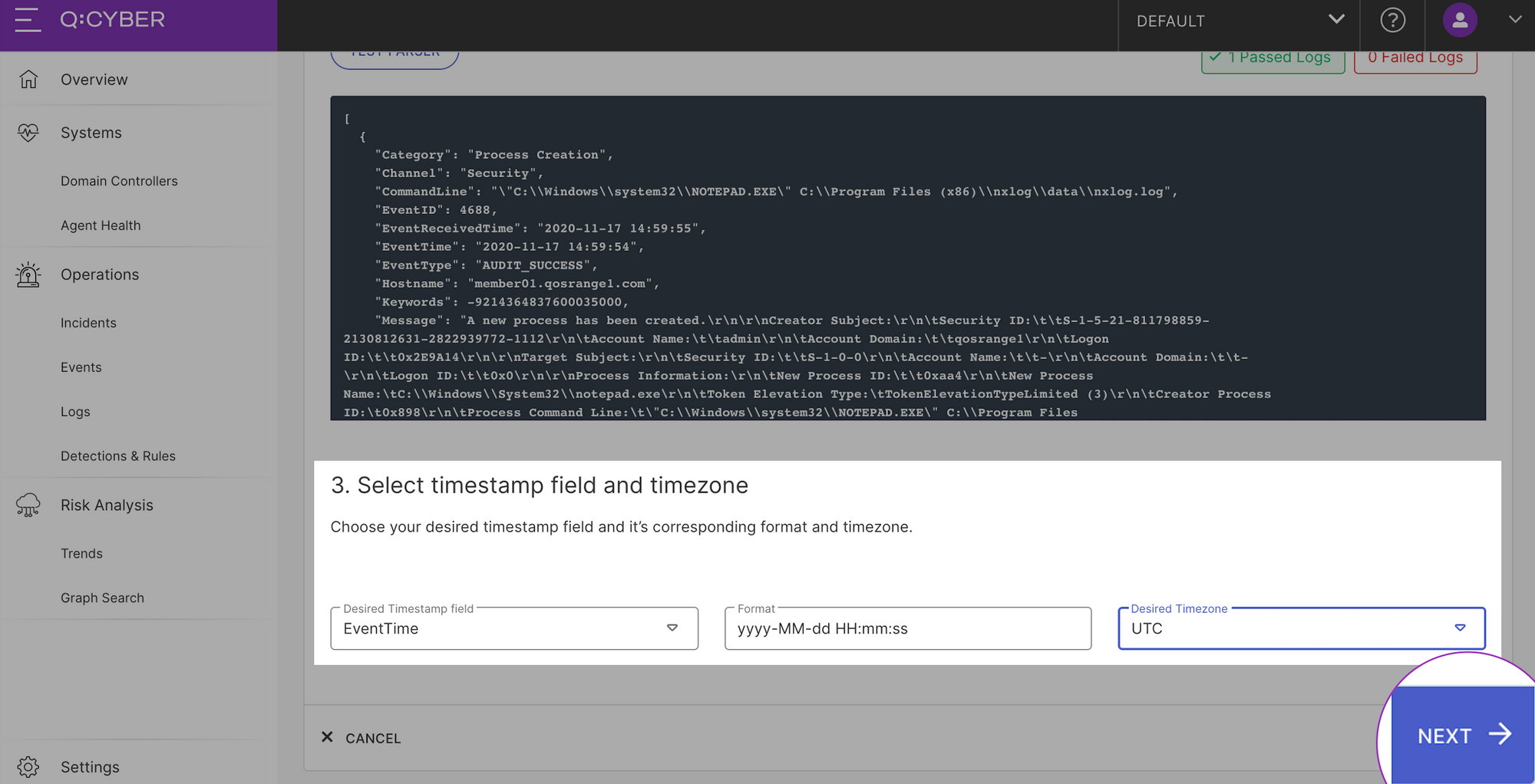

Selecting the Test Parser button applies the parser to the existing logs and shows whether any of them fail to parse. Then specify the log event time field, format, and timezone mapping.
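The event time field, format, and timezone together determine how a raw timestamp string becomes an unambiguous point in time. The Python sketch below shows that idea in general terms. The `EventTime` field name, the format string, and the fixed UTC offset are assumptions for this example and do not describe how Q:CYBER implements the mapping.

```python
from datetime import datetime, timedelta, timezone


def normalize_event_time(record, field, fmt, utc_offset_hours):
    """Parse record[field] using fmt, attach the source offset, return UTC."""
    naive = datetime.strptime(record[field], fmt)
    source_tz = timezone(timedelta(hours=utc_offset_hours))
    return naive.replace(tzinfo=source_tz).astimezone(timezone.utc)


# Hypothetical record: a server logging local time at UTC-5.
record = {"EventTime": "2020-05-01 12:34:56"}
event_utc = normalize_event_time(record, "EventTime", "%Y-%m-%d %H:%M:%S", -5)
```

A fixed offset keeps the sketch dependency-free but ignores daylight saving changes. A named time zone would handle those.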

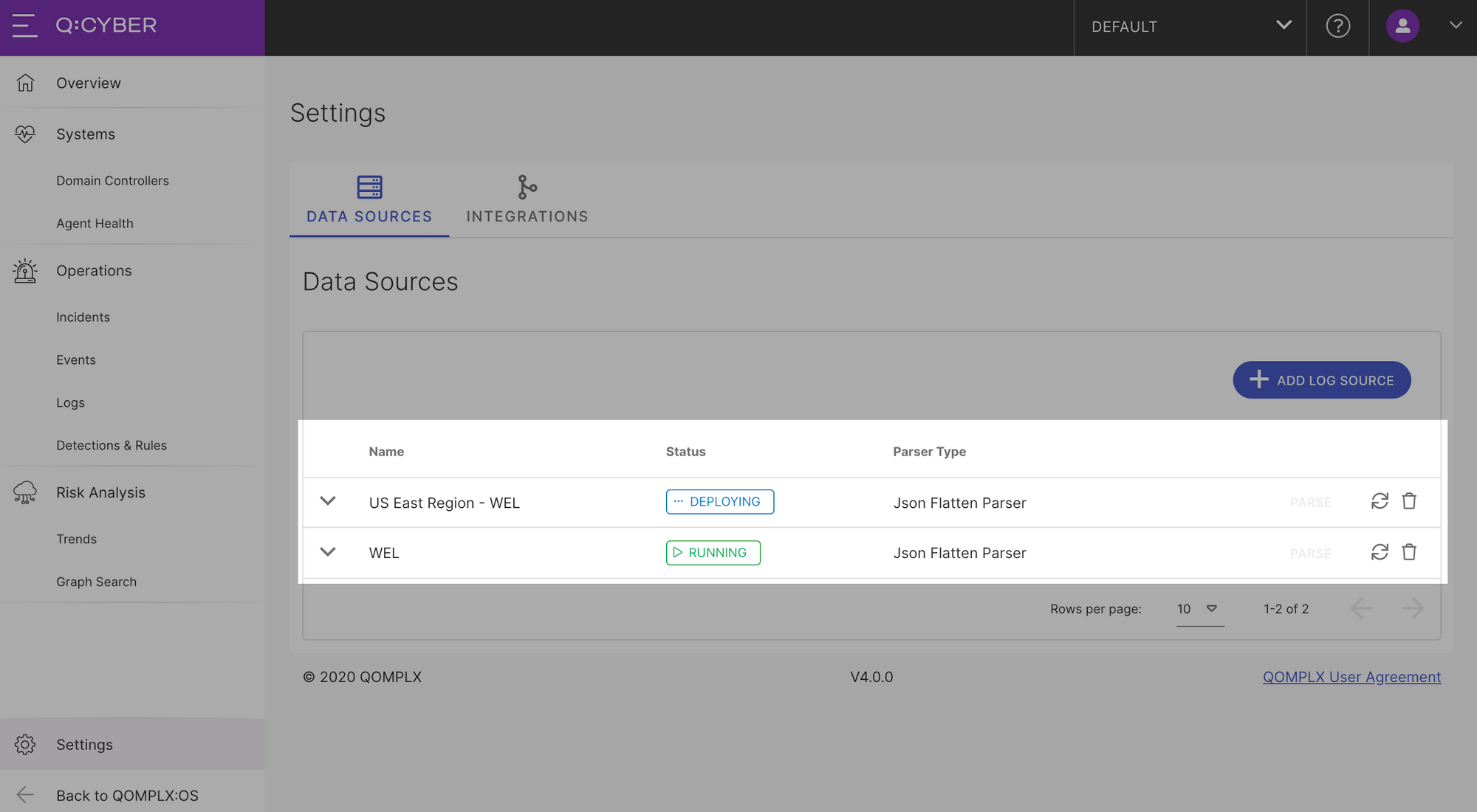

Selecting Next will bring you to the overview and confirmation page. If you don’t want to change anything, then select Next and you're done! Q:CYBER will dynamically deploy your new parser and apply it to all existing and subsequent logs in the stream.

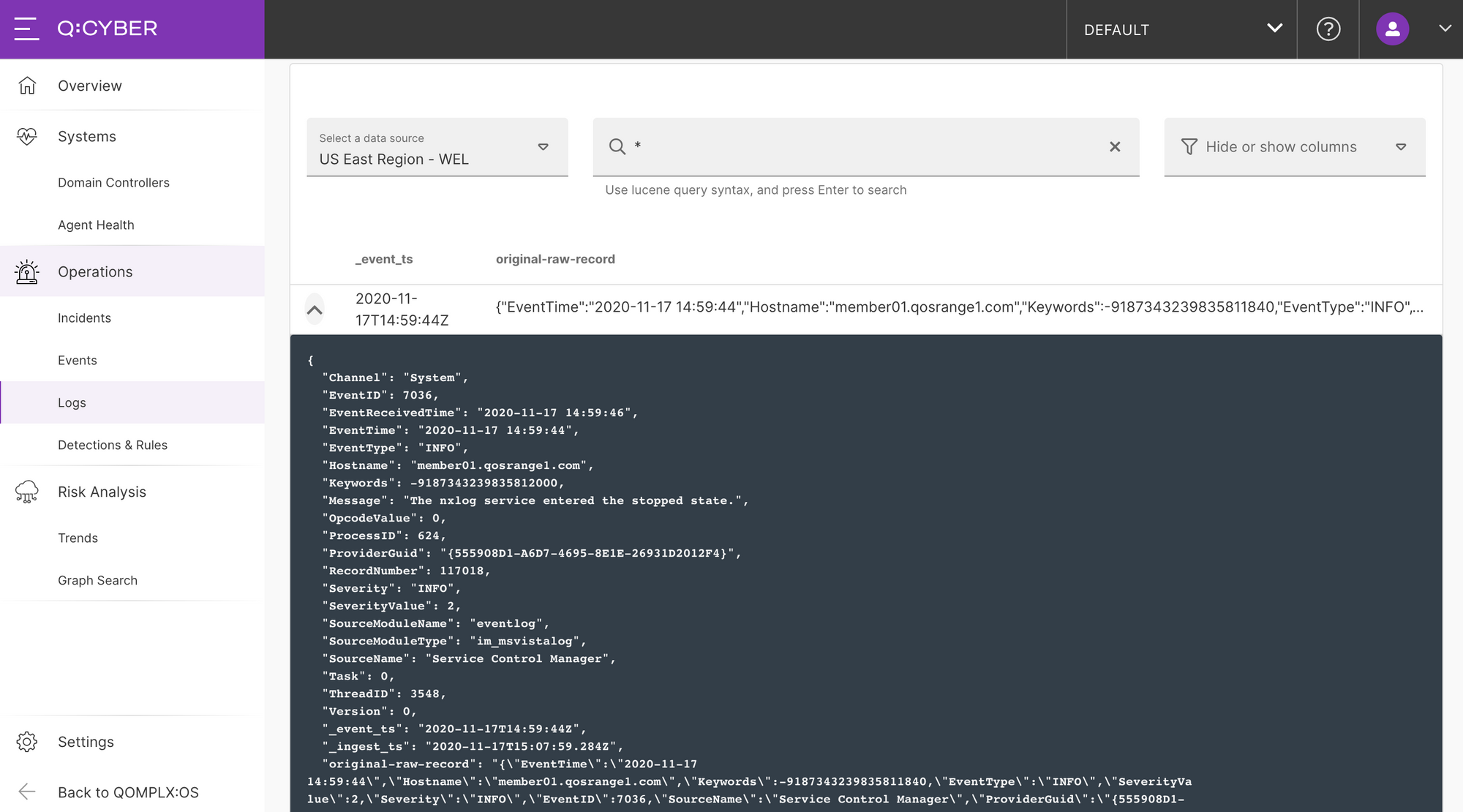

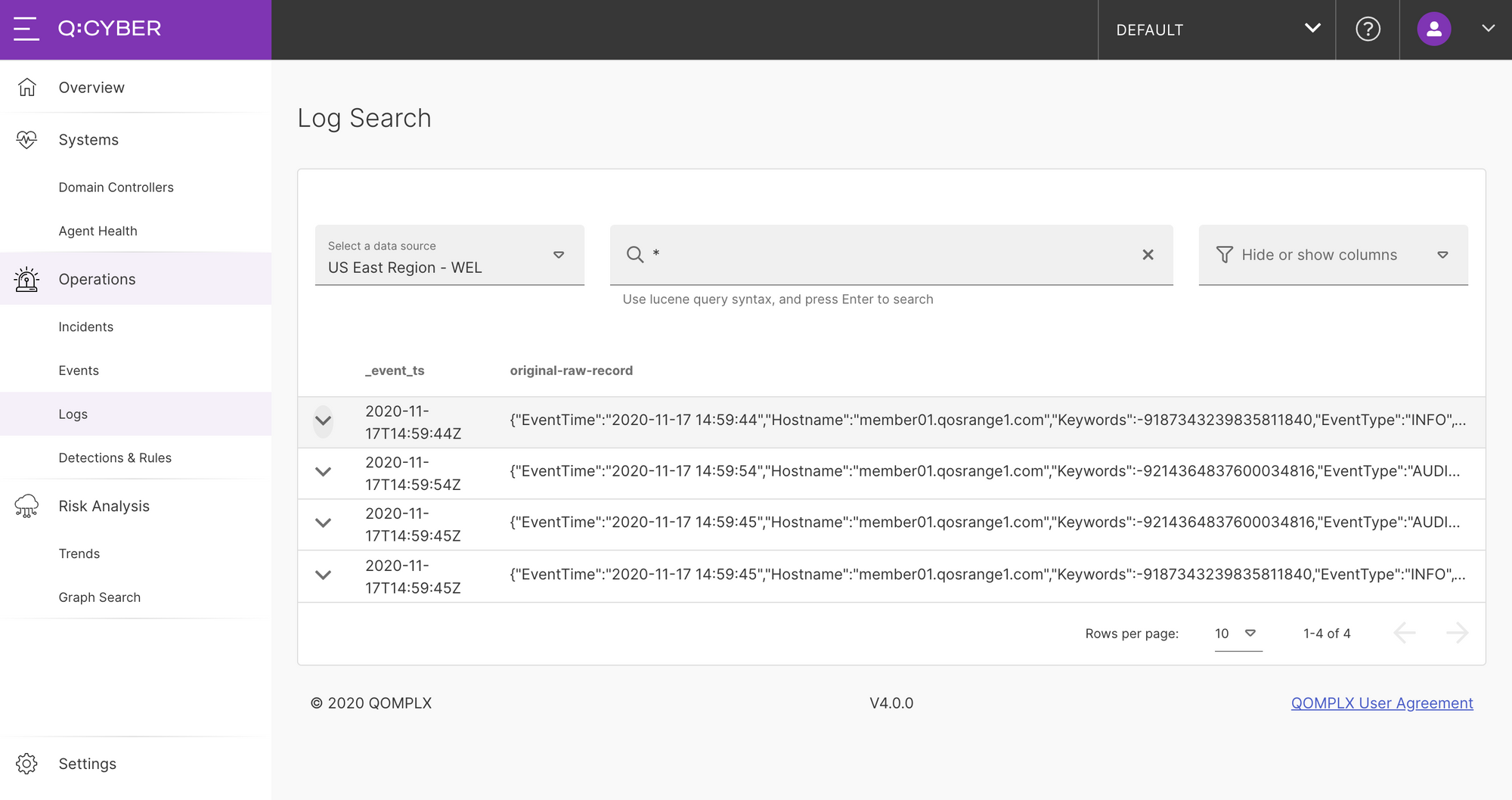

Searching Logs

As soon as logs are ingested and parsed, Q:CYBER begins indexing that data for search as shown below.

Now that a log source is flowing into Q:CYBER we can begin to do more interesting things with the data like write streaming detections.